Kumbaya AI: Why Singing Digital Campfire Songs with China Won’t Work (Or: How I Learned to Stop Worrying and Love the AI Arms Race)

A Response to MIT Technology Review’s Technological “Peace Plan”

The recent MIT Technology Review article “There can be no winners in a US-China AI arms race” presents itself as a voice of reason in an increasingly tense technological rivalry. However, beneath its veneer of diplomatic wisdom lies a dangerous cocktail of wishful thinking, historical amnesia, and strategic naivety that would make even the most optimistic 1990s “end of history” enthusiast blush (https://www.technologyreview.com/2025/01/21/1110269/there-can-be-no-winners-in-a-us-china-ai-arms-race/).

The False Promise of Cooperation: A Historical Perspective

The article’s central thesis – that the US and China must cooperate rather than compete on AI development – reads like a well-intentioned but misguided United Nations resolution, drafted by those who have forgotten (or never learned) how technological innovation actually occurs. One might as well have suggested in 1962 that the US and USSR should “cooperate” on their space programs for the “benefit of all humanity.” How charmingly naive that would have seemed during the Cuban Missile Crisis.

History shows unequivocally that competition, not kumbaya cooperation, drives technological advancement. The Space Race gave us unprecedented innovations not because Kennedy and Khrushchev shared their rocket blueprints over friendly games of chess, but precisely because they competed fiercely. The Internet, GPS, and numerous other technologies we now take for granted emerged from competitive pressure, not international singing circles.

The Cooperation Paradox

Perhaps most amusingly, the authors seem unaware of what might be called the “cooperation paradox” – the historical pattern in which the most significant international scientific cooperation occurs AFTER, not before, periods of intense competition have established clear hierarchies and boundaries. The authors have confused cause and effect with all the certainty of someone who believes the rooster’s crow causes the sun to rise.

The Fantasy of Shared Governance

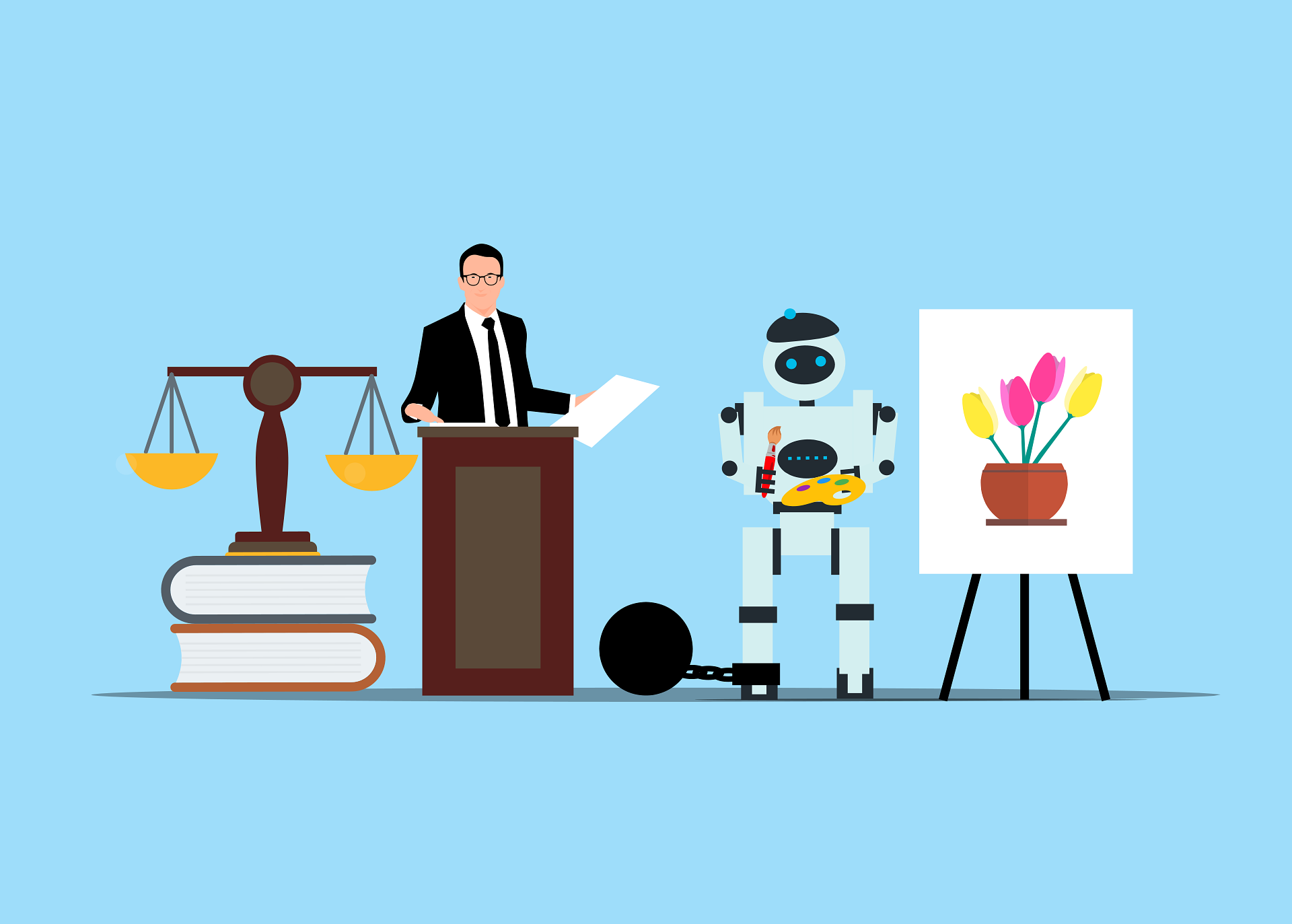

The proposal for “bilateral and multilateral AI governance” deserves special attention for its spectacular detachment from reality. One imagines the authors envision something akin to the International Atomic Energy Agency, but for AI – as if deep learning models were as easy to inspect as uranium enrichment facilities. The suggestion that we can simply agree on “ethical norms” across fundamentally different political systems would be comedic if it weren’t so dangerous.

Let us be clear: China’s vision for AI governance involves centralized control, surveillance, and social management that would make Orwell’s Big Brother seem technologically primitive. The Western approach, with its emphasis on individual privacy and rights, exists in a different moral universe. Suggesting these differences can be bridged with a few international conferences and memoranda of understanding is like suggesting that vegetarians and carnivores can resolve their differences by opening a restaurant together.

The Security Delusion

The article’s dismissal of national security concerns as mere rhetoric reveals a profound misunderstanding of both history and human nature. The authors seem to believe that by simply declaring security concerns illegitimate, they can wish away the real challenges of AI weapons development and military applications. One wonders if they would have suggested the same approach to nuclear weapons in 1945 – perhaps the Manhattan Project should have been a joint US-German initiative for the benefit of humanity?

The Technological Inevitability Myth

Another fundamental flaw in the article’s reasoning is its implicit assumption that AI development follows a single, inevitable path that all nations must traverse together. This ignores the reality that different societies may develop AI in radically different directions based on their values and objectives. The Chinese social credit system and the Western focus on individual privacy aren’t different points on the same path – they’re different paths altogether.

The Development Fallacy

The article’s assertion that developing nations view AI more positively than developed nations is both simplistic and irrelevant to the core strategic questions at hand. It’s rather like noting that people who don’t own cars are more excited about getting them than those who already have them – true, perhaps, but hardly a basis for international strategic planning.

Historical Parallels and Their Limitations

The authors’ comparison to nuclear arms control is particularly misguided. Nuclear weapons are binary – they either exist or they don’t, and their use is catastrophically obvious. AI capabilities exist on a spectrum, their development is often invisible, and their deployment can be subtle and deniable. Suggesting we can control AI development through international treaties shows a fundamental misunderstanding of both the technology and the nature of international agreements.

The Economic Dimension

Conspicuously absent from the article’s analysis is any serious consideration of the economic implications of AI development. The authors seem to believe that the profit motive and national economic interests will politely step aside in favor of international cooperation. One wonders if they’ve ever visited a stock exchange or read a corporate earnings report.

A More Realistic Path Forward

Rather than pursuing an unrealistic vision of complete cooperation, the US should:

- Maintain and expand its competitive advantage in key AI capabilities while identifying specific, limited areas where cooperation makes tactical sense

- Develop robust AI security frameworks independently rather than waiting for international consensus that will never arrive

- Strengthen alliances with democratic partners who share our values regarding AI development

- Invest heavily in domestic AI research and development rather than hoping for international collaboration to solve our challenges

- Establish clear red lines and consequences for their violation, while maintaining diplomatic channels for crisis management

- Develop resilient AI supply chains that aren’t dependent on potential adversaries

Learning from Past Technological Revolutions

The history of technological revolution offers clear lessons that the article’s authors seem determined to ignore. The Industrial Revolution wasn’t managed by international committee. The digital revolution wasn’t guided by UN resolutions. Each technological revolution created winners and losers, shifted the global balance of power, and eventually settled into new equilibriums through competition, not cooperation.

Conclusion: Reality Over Fantasy

While the article’s authors are correct that unrestricted AI competition carries risks, their proposed solution of comprehensive cooperation belongs in the realm of fantasy rather than serious policy discussion. The path forward lies not in pretending we can eliminate competition, but in managing it intelligently while maintaining our technological edge. The stakes are too high to indulge in wishful thinking about international cooperation overcoming fundamental strategic rivalries.

The real challenge is not choosing between competition and cooperation, but determining how to compete effectively while maintaining sufficient dialogue to prevent catastrophic outcomes. This requires clear-eyed realism about both the potential and limitations of international cooperation in AI development. Anything less is not just naive – it’s dangerous.

As we stand at this technological crossroads, we would do well to remember that the road to technological irrelevance is paved with good intentions and international cooperation agreements. The choice before us is not between competition and cooperation, but between maintaining our technological sovereignty and surrendering it in the name of a cooperation that exists only in the minds of well-meaning but misguided observers.

Robert Nogacki – licensed legal counsel (radca prawny, WA-9026), Founder of Kancelaria Prawna Skarbiec.

There are lawyers who practice law. And there are those who deal with problems for which the law has no ready answer. For over twenty years, Kancelaria Skarbiec has worked at the intersection of tax law, corporate structures, and the deeply human reluctance to give the state more than the state is owed. We advise entrepreneurs from over a dozen countries – from those on the Forbes list to those whose bank account was just seized by the tax authority and who do not know what to do tomorrow morning.

Nogacki is one of the most frequently cited experts on tax law in Polish media. He writes for Rzeczpospolita, Dziennik Gazeta Prawna, and Parkiet not because it looks good on a résumé, but because certain things cannot be explained in a court filing and someone needs to say them out loud. Author of AI Decoding Satoshi Nakamoto: Artificial Intelligence on the Trail of Bitcoin’s Creator. Co-author of the award-winning book Bezpieczeństwo współczesnej firmy (Security of a Modern Company).

Kancelaria Skarbiec holds top positions in the tax law firm rankings of Dziennik Gazeta Prawna. Four-time winner of the European Medal, recipient of the title International Tax Planning Law Firm of the Year in Poland.

He specializes in tax disputes with fiscal authorities, international tax planning, crypto-asset regulation, and asset protection. Since 2006, he has led the WGI case – one of the longest-running criminal proceedings in the history of the Polish financial market – because there are things you do not leave half-done, even if they take two decades. He believes the law is too serious to be treated only seriously – and that the best legal advice is the kind that ensures the client never has to stand before a court.